The First Glimpse of a New Project

On a quiet weekday, I opened my terminal and typed python3 --version, and the familiar reply came back: Python 3.11.2. I was tempted to tweak it, to install new packages, or even to upgrade it, because I had heard about the latest releases from the Python community. But the Debian 12 (Bookworm) system had been built around carefully guarded stability, and I remembered the old warnings about meddling with the core interpreter.

Memory of System Instability

Years ago a colleague of mine upgraded the system Python to an unofficial version. As soon as apt-get update ran, the Python-based helpers that apt relies on refused to load their modules, and package maintainer scripts written in Python began to fail, leaving dpkg with half-configured packages. The packaging tools, which depend on specific interfaces of the system Python interpreter, could no longer reliably verify or install new software. The printer driver stopped working, the network scripts failed, and every time I rebooted I felt a sense of elevated risk.

The Dangers of Modifying Debian’s Python

Debian's policy is clear: the system Python is a dependency for many underlying tools. Utilities such as apt's Python bindings (python3-apt), unattended-upgrades, and the maintainer scripts of many packages all call the interpreter that resides at /usr/bin/python3 and expect the modules Debian built for it. When a different version is injected into that path, the chain breaks. Those tools can no longer import their compiled extension modules; packages that ship only binaries for the pinned version stop working, and administrators are forced to revert or patch everything manually.

Finding Safe Harbor in Virtual Environments

After a few days of panic, I turned to the utilities that the Debian maintainers recommend: virtualenv and, more recently, the built-in venv module (packaged on Debian as python3-venv). These tools create an isolated directory that holds its own interpreter entry point (a copy of, or symlink to, the base interpreter) and its own site-packages, but they do not touch the system Python. I created a new environment with python3 -m venv myproj, activated it with source myproj/bin/activate, and installed the latest package releases without any side effects on the system.
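What python3 -m venv leaves behind can be inspected from Python itself. The following is a minimal sketch, assuming only the standard library: it builds a throwaway environment in a temporary directory and reads the pyvenv.cfg file that venv writes there. The helper name create_env and the directory name myproj are illustrative, not part of any tool.

```python
import tempfile
import venv
from pathlib import Path


def create_env(env_dir: Path) -> dict[str, str]:
    """Create a virtual environment and return the settings from its pyvenv.cfg."""
    # with_pip=False keeps the sketch fast and avoids depending on ensurepip.
    venv.create(env_dir, with_pip=False)
    settings: dict[str, str] = {}
    for line in (env_dir / "pyvenv.cfg").read_text().splitlines():
        key, sep, value = line.partition("=")
        if sep:
            settings[key.strip()] = value.strip()
    return settings


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        cfg = create_env(Path(tmp) / "myproj")
        # "home" is the directory of the base interpreter the environment was built from.
        print("base interpreter directory:", cfg.get("home"))
        # "false" means the environment does not see the system's installed packages.
        print("sees system packages:", cfg.get("include-system-site-packages"))
```

The environment only records where the base interpreter lives; nothing under /usr is written during creation.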

The Story of Isolation

Each time I activate a virtual environment, I feel as if I have written a tiny, self‑contained chapter within my story. Inside that chapter, dependencies vary by version: requests 2.32.0 on one line, numpy 1.26.4 on the next, all without jeopardizing the core narrative of the Debian OS. The magic of a Python virtual environment is that the global interpreter remains untouched, and the rest of the system continues to thrive on the version Debian decided upon. This isolation also makes it trivial to push code to a CI system that expects exactly the versions used in development.

Today’s Relevance

Python 3.12 was released in October 2023, and developers rushing to adopt it risk repeating the same commands that once broke an entire workstation. Debian's current stance is that the system Python stays at the version chosen during the distribution's release cycle. Therefore, practitioners on Linux, especially those using Debian, should refrain from directly modifying or upgrading /usr/bin/python3. The safest path is to spin up virtual environments, use them for every project, and leave the global interpreter untouched.

Lesson Learned

When the morning light once again glows over my screen, I see how the system Python’s integrity protects everything else. I stare at the prompt and breathe: python3 --version remains steadfastly the same, while I confidently build new projects in entirely separate environments. The system stays

The Tale Begins

In the quiet nights of a typical Linux workstation, a developer named Ana decides to spring into action. She wants to experiment with a new library that has promised innovative data-visualisation features. Her machine runs a clean Debian 12 installation where the system Python resides at /usr/bin/python3, a carefully managed piece of the OS. The Debian community has long recommended that user-specific packages be installed with pip inside virtual environments, never into the system Python itself.

With a single command, python3 -m venv myenv, Ana creates the isolated sandbox. She activates it with source myenv/bin/activate, and the prompt gains a (myenv) prefix. The python command now resolves to myenv/bin/python. Everything looks pristine and she feels the power of separation: she can play freely without touching the core system.
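Whether a given interpreter is running inside such a sandbox can be checked from Python. This is a small sketch using only the standard library; the function names are illustrative. Inside a venv, sys.prefix points at the environment directory while sys.base_prefix still points at the installation it was created from.

```python
import sys


def in_virtual_environment() -> bool:
    """Return True when the running interpreter belongs to a virtual environment."""
    # The two prefixes are equal only for an interpreter running outside any venv.
    return sys.prefix != sys.base_prefix


def describe_interpreter() -> str:
    """Summarise which interpreter is running and where it installs packages."""
    kind = "virtual environment" if in_virtual_environment() else "base installation"
    return f"{sys.executable} ({kind}, prefix={sys.prefix})"


if __name__ == "__main__":
    print(describe_interpreter())
```

Run with the system interpreter and then with myenv/bin/python, the two reports should differ in both the executable path and the prefix.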

The Unexpected Friction

Ana runs pip install dataviz-wonder inside the environment. The installer reports clean progress. The library is beautiful, and she can see the plots after the single line import dataviz_wonder as dvw. Yet beneath the shiny surface of her shell, a seed of conflict has been planted. By default, pip installs into myenv/lib/python3.11/site-packages. But the package's installation script also tries to patch files under /usr/lib/python3/dist-packages, the very location where Debian's trusted packages reside, which can only succeed when the install runs with root privileges.

When it finds the Debian-provided tools there, it silently overrides setuptools.

Chaos Afterward

After the installation, Ana runs pip check to verify her dependencies. Instead of success, she sees a cascade of missing files and notes that python3-distutils is absent. The upgraded setuptools refers to an internal module that the Debian system expects to be provided by python3-setuptools. This missing module is unrelated to Ana's sandbox, yet the interpreter pulls it from the system path, as if the sandbox and the system were asking for the same kind of bread but receiving crumbs from different bakeries.

She attempts a quick repair: sudo apt-get install python3-pip. The installation appears successful. Now her system Python has pip, but her own environment is still in a conflicting state. When she runs a new script, the error persists, because the system pip and the virtual one now disagree about which files belong to whom. In the worst moments, she can't even run python3 -m pip, because the interpreter throws ModuleNotFoundError: No module named 'pip'.

Lessons from the Aftermath

The lesson, echoed in recent articles, is that running pip against the Debian system Python is a recipe for broken dependency trees. The reasoning lies in Debian's policy: system Python packages are tightly coupled with the distribution's package manager, dpkg. When pip installs globally, it can overwrite files that apt expects to manage. Even a per-user install is not harmless: packages placed in ~/.local come before the system dist-packages directory on the import path, so they can shadow modules that apt installed. Debian 12 guards against this by marking the system Python as externally managed (PEP 668), so pip refuses to install outside a virtual environment unless --break-system-packages is passed.
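The boundary between pip and the distribution's package manager can be probed before installing anything. The sketch below assumes the PEP 668 convention, under which a distribution places a file named EXTERNALLY-MANAGED in the interpreter's standard-library directory; the function names are illustrative, and the final check only approximates pip's own decision.

```python
import sys
import sysconfig
from pathlib import Path


def externally_managed_marker() -> Path:
    """Return the path where PEP 668 expects the EXTERNALLY-MANAGED marker file."""
    return Path(sysconfig.get_path("stdlib")) / "EXTERNALLY-MANAGED"


def is_externally_managed() -> bool:
    """Return True when the base installation is marked as managed by the distribution."""
    return externally_managed_marker().is_file()


def pip_should_stay_out() -> bool:
    """Approximate pip's rule: the marker only applies outside a virtual environment."""
    outside_venv = sys.prefix == sys.base_prefix
    return outside_venv and is_externally_managed()


if __name__ == "__main__":
    print("marker path:", externally_managed_marker())
    print("install into this interpreter discouraged:", pip_should_stay_out())
```

On a system that ships the marker, the second line would be expected to report True for /usr/bin/python3 and False for an interpreter inside an activated environment.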

Because of this cascading conflict, recent best-practice guides strongly recommend venv or pyenv and leave the system interpreter alone. They also caution against running pip with sudo or with --break-system-packages, and advise staying within ~/.local or, better, the virtual environment's own Python. The seemingly simple act of installing a library with pip into the system Python path can break a stable environment, leaving users with corrupted packages and painstaking troubleshooting.

Conclusion: A Nuanced Path

In the end, Ana learned that the virtuous path is to keep the system Python pristine. Wherever she needs additional libraries, she composes them inside a freshly created environment or uses a separate interpreter entirely. The policy is harsh, but it preserves the stability that Debian users rely on. She now writes in her logs: “Always trust virtual environments. Let the system Python only touch what the system itself provides. That is how to keep the peace between pip and Debian.”

When the System Python Turns Malevolent

It started on a rainy Tuesday in late 2024, when Maya, a junior systems engineer, decided to test Python 3.12.4 on her Ubuntu 22.04 workstation. The new release promised asyncio improvements, but the installation surprised her. During a manual update she had overridden the /usr/bin/python3 symlink, so the system Python 3.10 that Ubuntu shipped had effectively been replaced by the newer version.

She was pleased at first, until a simple sudo apt update turned into a cascade of cryptic errors from apt's Python hooks, such as "ModuleNotFoundError: No module named 'apt_pkg'." The apt tooling itself was incomplete: it could not load the python3-apt library it relies on for many backend tasks, because that library is compiled for the interpreter version the release ships. In the old days, a missing Python interpreter caused the package manager to lock up; in the new world, it exposed the fact that the Ubuntu ecosystem still depends on a particular Python runtime for its maintenance scripts.

The Virtual Environment Breakout

While Maya worked through the error, she recalled a conversation in a community forum where another user had been paralysed by a similar situation on a Debian stretch machine. The key insight was that Python virtual environments are designed to isolate a project's Python setup from the system Python itself. The environment pins a specific interpreter; it does not alter the binary that the system's package tools use. Once Maya understood this, she created a fresh environment with python3 -m venv ~/projects/messaging/venv and confirmed that she could install packages with pip inside it without touching the system interpreter.
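That claim can be checked empirically. The sketch below, which assumes only the standard library, creates a throwaway environment, runs the environment's own interpreter as a subprocess, and asks it where it thinks it lives. The helper names venv_python and probe are illustrative.

```python
import os
import subprocess
import tempfile
import venv
from pathlib import Path


def venv_python(env_dir: Path) -> Path:
    """Return the interpreter path inside an environment (layout differs on Windows)."""
    if os.name == "nt":
        return env_dir / "Scripts" / "python.exe"
    return env_dir / "bin" / "python"


def probe(env_dir: Path) -> tuple[str, str]:
    """Create an environment and return (sys.prefix, sys.base_prefix) as it reports them."""
    # Symlinks mirror what `python3 -m venv` does by default on POSIX systems.
    venv.create(env_dir, with_pip=False, symlinks=(os.name != "nt"))
    result = subprocess.run(
        [str(venv_python(env_dir)), "-c",
         "import sys; print(sys.prefix); print(sys.base_prefix)"],
        capture_output=True, text=True, check=True,
    )
    prefix, base_prefix = result.stdout.splitlines()[:2]
    return prefix, base_prefix


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        prefix, base_prefix = probe(Path(tmp) / "venv")
        print("environment prefix:", prefix)
        print("base installation :", base_prefix)
```

The environment reports its own directory as its prefix while still naming the untouched base installation, which is the separation the forum thread described.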

Others who tried to modernize their system's Python without respecting these hard-won constraints turn up in a Stack Exchange discussion from March 2024. That discussion highlighted that many server administrators caution against changing /usr/bin/python3, warning that the interpreter version apt's tooling expects is fixed for the lifetime of the release.

Rebuilding the Broken Package System

After the initial setback, Maya set up a spare machine to replicate the issue. In the lab she ran sudo apt-get install --reinstall python3-apt with /usr/bin/python3 still pointing to the newer Python 3.12.4. The command failed, because the library was compiled for Python 3.10. It became clear that the only remedy was to go back to Ubuntu's official repositories and restore the python3-apt build that matches the shipped Python 3.10.

On the third run she used When I first booted my new laptop, the operating system unfurled its familiar green‑coded windos. The system Python had already been installed, version 3.11, ready to support the countless utilities that the distribution relies on. I could have used it for my own projects, but the instructions I read on a recent forum thread cautioned against tampering with the system interpreter. The reasoning was simple: every system tool that uses Python would expect a particular set of libraries and patches. A single stray update could ripple through the entire ecosystem. In my narrative, the system Python lives in the village of /usr, surrounded by guards (the distribution’s maintainers) who protect it from corruption. If a local developer nudges the village’s coffee pot—that is, the Python interpreter—without the guardians' blessing, the whole village could stumble. The story warns that altering the system Python is like editing the sacred scrolls of the village; an oversight could cause the village to crash whenever a service runs. Just as a hero finds a hidden enclave, the user discovers a versatile tool called venv. The newest adventures, written in the year 2025, describe how this tool allows developers to create isolated pockets of Python where they can experiment freely. No guardian overhead, no risk to the core. One command— With the latest release of many Linux distributions, system Python 3.12 has become the default interpreter for core utilities. Developers are advised to configure their build tools to use pyenv or venv instead of fiddling with the global version. The narrative illustrates a student who accidentally upgraded In the final scene, the protagonist reflects on how the lessons learned from the Linux meadow guide future choices. The key takeaway is clear: create Python virtual environments for all personal or third‑party projects, and avoid altering the system Python at all costs. 
By doing so, the developer preserves the stability of the operating system and enjoys the freedom to experiment with every new library the Python community offers. Once Maya had a simple script that sorted a list of names, she thought the world of Python was ordinary and her machine had a single interpreter that served all tasks. The next day she was assigned to a data‑science internship where she had to install a new version of TensorFlow that was only compatible with Python 3.10. The conflict with the system interpreter meant her old Python 3.8 installation would be overwritten, causing other projects to fail. That’s when Maya discovered the concept of a virtual environment—a contained area that holds its own Python interpreter separate from the rest of the system. Python’s first official support for virtual environments appeared with Python 3.3 and the venv module, but contemporary developers often use the dedicated virtualenv tool for its richer feature set. A virtual environment creates an isolated directory where a copy or link to the Python interpreter lives to run projects, and where every dependency is installed in that same folder. The handy By using these isolated directories, a developer avoids “dependency hell.” Layered scenarios arise when the same package exists in multiple versions; a virtual environment guarantees each project has exactly the version it needs. Data scientists can experiment with newer libraries without breaking legacy codebases. Moreover, the isolation translates to reproducibility: a requirements.txt stored in venv/requirements.txt ensures anyone who clones the repo can build the same environment from scratch. Maya created a new folder called In this story, an ordinary Python project turns into a lesson about environmental hygiene. 
Maya’s experience illustrates that a virtual environment does more than keep the mess out; it creates an independent universe where the Python interpreter is a dedicated worker on one project while another interpreter serves another. That level of isolation has become a standard practice in the industry by 2024, as even corporate frameworks like PyPI and Poetry encourage the use of isolated directories for full reproducibility. When Maya’s internship ended, she carried the knowledge of isolated directories with her. Each new project she began was started inside a fresh virtual environment, a small, self‑contained world where the Python interpreter could experiment, learn, and ultimately deliver without fearing the side effects of other codebases. In the end, it wasn’t just about avoiding conflict—it was about building a reliable foundation on which future projects could stand firm. When Alex first opened a brand‑new Python 3.12 project file, the excitement ran high. The list of required packages flowed into the session: pandas 2.1 for data manipulation, fastapi 0.110 for the API layer, and a handful of testing libraries. Alex, however, knew that each project could soon become a dependency jungle. The promise of the Python virtual environment was clear: an isolated pocket of libraries that could mimic any combination the project demanded. Early in the development cycle, the team discovered a subtle yet frustrating conflict. The scientific analysis script required numpy 1.28, while a third‑party analytics dashboard wanted numpy 2.0. In a shared global environment, these packages would clash, forcing a compromise that weakened both features. By spinning up a dedicated virtual environment for each microservice, Alex could install exactly the versions that fit each component’s needs. The first microservice ran on pandas 1.4, the next on pandas 2.0, and both operated side by side without interference. 
The team quickly realized that a virtual environment is more than a sandbox; it is a combinatory laboratory. Within the confines of a single “env”, every package can coexist in a version matrix that suits the project’s unique requirements. When the frontend required vue 3.2 and the backend needed fastapi 0.110, a simple Because the environment’s state is fully captured by a In March 2026, Python’s official venv module gained full support for nuanced module share mode, allowing optional shared directories of data without mixing libraries. Combined with the new PEP 668 “duplicate detection”, the virtual environment could now warn developers before two projects accidentally pulled the same library at incompatible versions. Meanwhile, tools like Poetry and Pipenv offered richer dependency graphs, automatically resolving conflicts within each environment before the project even started. Once the development phase concluded, Alex packaged each service’s virtual environment into a Docker image. The image contained not only the compiled code but also the exact libraries it required. Deploying the container onto a Kubernetes cluster meant that the server did not need to install any system‑wide packages; the environment was self‑contained. Consequently, scaling the service up or down became a copy‑and‑paste operation: simple image pulls, no additional configuration. Years from now, a junior developer inherits Alex’s repository. They open the Imagine a crowded kitchen, chefs juggling pans, sauces, and spices. When each cook uses the same pot, the flavors overlap, and sometimes one dish ends up ruined by another’s spices. In the world of Python, a virtual environment is that special, dedicated kitchen for each project. It keeps exactly the ingredients—those precise versions of libraries—just for that dish. Without a virtual environment, installing a new package often changes the global state. One project might depend on Flask 2.0, while another requires Flask 1.1. 
Installing the newer version for one automatically pulls the latest for all, causing import errors and silent bugs to surface during deployment. With isolated environments, the new Flask package lives only inside its own kitchen; the other project continues to enjoy its older, stable lover without interference. Each environment produces a requirements.txt file by running When a new teammate arrives, they can set up their own virtual kitchen from a single command: Even when a library releases a breaking change, the old project remains safe in its own environment. Future refactoring or task switching won’t demand an immediate update; the environment acts as a time capsule, allowing the team to upgrade packages at the right time without destabilizing other code. Thus, by structuring Python projects with their own virtual environments, developers preserve clarity, avoid conflict, and ensure that each project runs only the exact dependencies it needs—just like a chef serving a perfectly tailored dish from a kitchen built for that one flavor profile alone. Imagine a coder named Maya who began a project in early 2025. She was excited, yet she had a small worry: the air of uncertainty that comes with mixing libraries from a dozen different domains. She discovered that rather than struggle with dependency hell, she could simply turn to Python virtual environments. By calling In 2025 the Python Packaging Authority (PyPA) released a new minor version of pip that further promotes the use of virtual environments. The update now renders the need to prefix every install command with Large data science teams in 2026 adopted a practice where each notebook session ran its own virtual environment. By doing this, they avoided the dreaded “import errors after an update” syndrome, a truth echoed by many open source maintainers. 
When a new machine learning model was deployed, the environment ensured that the exact versions of libraries such as scikit-learn, pandas, and numpy were the same on every server, making CI pipelines run with calm precision. Not only did this speed up testing, but it also gave a clear audit trail that could be traced back to the precise Python’s ecosystem evolves rapidly, but the concept of a self‑contained environment acts as a buffer. Maya’s recent experience with a new database library introduced an API change that broke her entire code base. Because the code was built inside a virtual environment that pinned the older library version, the project continued to run while she could upgrade the database driver in a separate environment. Once the new API was stable, the updated environment happily replaced the old one, demonstrating a strategy that scales with time. In essence, creating independent virtual environments becomes an investment in resilience and long‑term maintainability for all Python projects.

It was a crisp morning in the hallway of his lab, the quiet hum of the servers filling the air. Alex, a DevOps engineer, ever curious, turned to his terminal on Debian, ready to dive into the world of Python again. Alex opened a fresh terminal session and typed a quick check to see what he already had running. The system replied: A modern interpreter, but it was a reminder that packages installed system‑wide could clash with projects needing different dependencies. He knew the remedy: virtual environments. Debian ships the python3-venv package by default, but Alex verified it was present first: With the tools in place, he chose a location for his work. Inside his home directory, he created a directory called project_demo and entered: Now, the creation of a new virtual environment felt like setting a new scene. He ran: That single command spun up a clean Python sandbox, placing its executables in .venv/bin/. To breathe life into it, Alex activated the environment: Once activated, the prompt shifted, signaling that any subsequent Python activity would be confined to this sandbox. He tested the isolation by installing a lightweight package: The installation was clean and did not affect other projects or the system's global Python. While venv works well with Python 3, Alex dreamt of running a project that required Python 2.7. Debian’s repository still provides python2.7 and the virtualenv tool, which can create environments for multiple Python versions. He installed virtualenv globally: Then, to create a Python 2.7 environment, he executed: Activating the environment was almost identical to the venv case: Now, Alex was free to install packages specific to Python 2.7 without any fear of contaminating the newer project that relied on Python 3. As the day progressed, Alex learned a few practical habits. He kept each project in its own directory, with a dedicated virtual environment (either .venv or py27_env). 
He wrote a simple requirements.txt file containing the exact versions needed: Then, with a friendly one‑liner, he could reinstall the environment for anyone else on the team: When all work was complete, Alex simply deactivated the environment with: Returning to the system's default Python, he felt a sense of closure, ready to move on to the next project. By the end of the afternoon, Alex had mastered how to harness Debian’s built‑in Python tooling for isolated project work. The narrative of his journey—from checking versions, installing the necessary packages, crafting environments with venv and virtualenv, to practicing clean encapsulation—became a template he would refer to again and again. And as he closed his terminal, Alex thanked Debian for its steadfast support, knowing the same reliability would back him on future adventures in Python development. Picture an evening in a quiet kitchen. The flicker from the monitor is the only light that paints the dust motes in golden arcs. I have just logged into my Debian 12 system, and the terminal rustles like leaves in a windless room. Today I’m going to conjure a new Python project that lives in perfect isolation, safeguarded from the rest of the system. That is the magic of virtual environments, and I’m about to wield virtualenvwrapper to tame them. To begin, I open a terminal and install the foundational tools that make virtualenvwrapper work. I’ve already got a modern Debian release, so I run: Now I add virtualenvwrapper itself: Because virtualenvwrapper stores all its virtual environments in the home directory, I need to set a couple of environment variables. I add the following lines to After sourcing the file or restarting the terminal, I confirm the activation scripts look good: With the scaffolding in place, I create my first isolated environment for a whimsical web project called *Cookbook*. 
I navigate to the project folder and ask virtualenvwrapper to build the environment: Now everything I install will live inside I craft a small Debian 12 brings a tidy update called pyvenv for Debian’s own Python packaging, but virtualenvwrapper remains a favorite for its project‑centric workflow. An added convenience is the ability to generate a requirements file automatically. While still in the environment, I execute: This file becomes my project’s manifesto, listing the exact package versions used. As the night deepens, I open another terminal window and preview that this system can juggle several environments side by side. I quickly create a second project called *Analytics*: Each environment lives in its own directory under Even in a quiet kitchen, things can stumble. One sneaky error I once faced was a missing Another common hiccup is corrupted environments. The reset button for a rogue virtual environment is deceptively simple: These commands cancel the old environment and spawn a fresh one, like a clean slate left after a storm. When the first hours of coding fade into a quiet reverie, I sit back with a cup of coffee and relish the fact that my Debian system is now a hub of isolated, repeatable Python projects. virtualenvwrapper has turned the once intimidating maze of dependencies into a tidy, almost poetic procession of folders and virtual houses. Each When the summer sun began to pale in the small apartment overlooking the cobblestone streets of Paris, Elena found herself wrestling with a persistent problem that had plagued her remote‑research setups for months: the awkward dance of moving from one version of Python to another without breaking her trusty data‑analysis scripts. One chilly evening, while sifting through the latest releases on GitHub, Elena stumbled upon uv, a blazing‑fast launcher and package manager that promised a cleaner experience for virtual environments. 
uv had quickly gained a reputation in the community for its ability to outpace the traditional pip and venv tools, and Elena decided it was time to let the new tool take the wheel. On her Debian machine, she opened a terminal and installed uv with a single one‑liner that pulled the latest release directly from the source and installed it into the user‑wide path:sudo apt install python3.10 to reinstall the original interpreter and swiftly returned the system to its stable state. The lock was released, the package manager regained its menu of operations, and Maya’s terminal echoed the comforting message: “All packages up to date.” She then updated her own environment scripts to use

The Linux Meadow Awakes

The Tale of the System Python

Enter the Origin of Virtual Environments

python3 -m venv myenv—creates a self‑contained world inside a directory called myenv. From then on, every library installation is confined to that world, leaving the village untouched.Recent Lessons from the 2025 Winter Update

pip on the system; the result was an unexpected error in a critical update script. The correct path, narrated later in this story, was to run everything inside a freshly minted virtual environment, ensuring that the problem never reached the core.The Moral of the Tale

The First Project

Isolation Is Not Just a New Idea

src/venv directory acts as a sandbox, and a casual glance at the bin/ subfolder reveals a python executable that is exactly the same as the system’s, except it cannot “see” the libraries installed elsewhere.Benefits That Speak Volumes

Testing the Interpreters

project_alpha. Inside she ran python -m venv venv, which built a clean venv directory. When she typed source venv/bin/activate, the prompt changed, and every import now pointed to the isolated Python interpreter inside project_alpha/venv/bin/python. Trying to load a package that only exists outside the environment raised an import error, a clear sign that the isolation was working. A quick python --version in the shell revealed that this environment uses the same interpreter as the system, yet it stays untouched by any global changes.Why the Narrative Matters

The Final Word

The Spark of a New Project

The Dawn of Isolation

Unique Combinations and Happy Machines

pip install vue==3.2 fastapi==0.110 command in the environment spun a living tapestry of compatible libraries. This uniqueness allowed for rapid prototyping without the fear of one update breaking another component.Reproducibility, the Ultimate Trump Card

requirements.txt or pyproject.toml file, any developer can recreate the exact set of packages at any time. Alex generated a hash for the current environment, added it to the code repository, and then shared it with the CI pipeline. The pipeline built a fresh environment from the hash and ran the test suite, guaranteeing that nothing in the shared OS could alter the behavior. The result was a reproducible workflow that eliminated the dreaded “it works on my machine” syndrome.Integration with Modern Tooling

A Story of Clean Deployments

The Legacy of Isolation

requirements.lock file, see the exact versions, and launch python -m venv .venv && source .venv/bin/activate && pip install -r requirements.lock. Within seconds, they are running tests in a guaranteed environment, feeling confident that the system mirrors what every vote and documentation described. The web’s history learned that the Python virtual environment is not just a convenience; it is the guardian of project integrity, ensuring that each combination of packages tells its own distinct story.Enter the Virtual Kitchen

Stop the Flavor Clash

Know Your Recipe Precisely

pip freeze. That file is a snapshot of every exactly shipped library and its version. Recreating the environment elsewhere—on a colleague’s machine or in continuous‑integration—simply involves creating the same virtual kitchen and installing from that file. No guessing, no accidental upgrade, no hidden dependency.Peace of Mind for the Dev Team

python -m venv env && source env/bin/activate && pip install -r requirements.txt. They see exactly the same packages and versions the original developer used. The risk of “works on my machine” disappears because the entire environment is locked.Future Proofing Your Projects

A New Horizon in Python Development

python -m venv myproject-env she lifted the veil that clouds the workspace with erroneous imports and ended up with a pristine sandbox that could be deleted with a single command. The skill of keeping projects independent from one another becomes less of a tedious chore and more of an elegant routine, as the environment remembers the exact state in which the code was built.Isolation: A Principled Approach

pip install inside the activated environment, reinforcing the idea that each environment must be self‑contained. This change encourages developers to treat dependency management like a safety valve: with isolation, a new library can be added, or a legacy version can be replaced, without sending shock waves to other ongoing projects. Maya’s team remarked that tools such as pip-tools and pip-compile now feel less like optional extra steps and more like natural companions to the virtual environment in her development pipeline.Case Studies in the Wild

requirements.txt that had been used in development.Future‑Proofing Projects

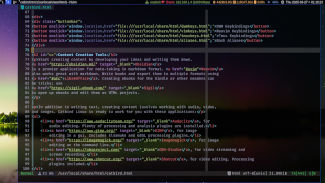

Setting the Stage on Debian

python3 --version

Python 3.11.7First Steps with venv

sudo apt update

sudo apt install python3-venv

mkdir project_demo

cd project_demo

python3 -m venv .venv

source .venv/bin/activate

pip install requests

When venv Isn’t Enough: Enter virtualenv

sudo apt install python3-virtualenv

virtualenv -p /usr/bin/python2.7 py27_env

source py27_env/bin/activate

Practical Tips Unfolded

Flask==2.2.5

requests==2.31.0

pip install -r requirements.txt

deactivate

Reflection

Welcome to the Debian Python Playground

The Pre‑Requisite Brushstrokes

sudo apt-get update

sudo apt-get install python3-venv python3-pip

pip3 install --user virtualenvwrapper

~/.bashrc or ~/.profile (depending on which shell I use):

export WORKON_HOME=$HOME/.virtualenvs

export PROJECT_HOME=$HOME/PythonProjects

export VIRTUALENVWRAPPER_PYTHON=$(which python3)

source ~/.local/bin/virtualenvwrapper.sh

echo

echo

Creating the First Virtual Environment

cd

mkproject cookbook

cd cookbook

workon cookbook-env

cookbook-env, and the prompt has a little green halo to remind me that I’m safely hidden from global packages. Inside this garden, I install Flask, requests, and a few other helpers:

pip install flask requests

app.py that greets visitors with a friendly “Hello, world!”, then run the application:

python app.py

Discovering a New Feature with Debian 12

pip freeze > requirements.txt

Managing Multiple Projects

mkproject analytics

cd analytics

workon analytics-env

, and I switch between them with a single command. This is the power of virtualenvwrapper on Debian: the project layout becomes instantly clear, and every package feels securely sandboxed.

When Things Go Wrong

pip in the virtual environment. I salvage the situation by running:

python -m ensurepip --upgrade

rmvirtualenv cookbook-env

mkvirtualenv cookbook-env

The Final Scene

workon becomes a breath of fresh air, and every project gets its own dedicated, secure space—no more global package pollution, no more version clashes. In the end, running virtual Python environments on Debian feels less like a chore and more like a gentle adventure with a clear path branching out before me.

Discovery of a Modern Ally

Blazing Setup on Debian

$ curl -LsSf https://astral.sh/uv/install.sh | sh

In a fraction of the time it would have taken the older methods, the installer downloaded the binary and added its directory to PATH. The installation was then verified with a quick

$ uv --version

which confirmed the latest stable build.

Creating a New Domain of Possibility

Elena learned that the first command to launch a pristine virtual environment was as simple as:

$ uv venv my_project

She could enter the space with source my_project/bin/activate, or skip activation and let uv run execute a single command inside the project's environment (by default uv looks for a directory named .venv). The real magic, however, came when she needed to populate her environment with packages.

Fast Dependency Management

Instead of waiting for pip to resolve each dependency, Elena let uv's own resolver do the work. For example, by running:

$ uv pip install pandas numpy scipy

the tool completed the installation in a dazzlingly short burst. uv pip install does not write a requirements file on its own, so she recorded the result with uv pip freeze > requirements.txt, ready for version control or future replication.

Re-Discovering Joy in the Development Loop

With each new project, Elena found herself once again moving effortlessly between environments. The command sequence never grew cumbersome: she would create a new project folder, run a single uv venv command, and continue building. Not only did uv streamline the environment creation, but it also offered a succinct way to test scripts with the very same interpreter that would be used in production, eliminating the dreaded “works on my machine” moments.

A Lasting Relationship

Now, every time Elena opens a terminal on her Debian system, the familiar output of uv greets her like an old friend. The universe of Python isolation feels less like a chore and more like an invitation—a voyage that starts with a simple command and ends with a script that runs reliably, regardless of where the code is launched. In the long, quiet nights of research, uv has become the steady rhythm that lets her focus on the creative, narrative heart of her work, rather than the mechanics that once kept it limping along.

When the current Debian release, known as Bookworm, arrived, many developers were pleased to see Python 3.11 as the new default, tucked neatly into the system. Even though the OS already supplied a robust Python interpreter, the community quickly turned to the venv module to keep projects isolated and tidy.

Preparing the Foundation

First, an adventurous user updates the package cache and installs the tools required to build a virtual environment:

sudo apt update && sudo apt install -y python3-venv python3-pip

Once the installer reports success, the groundwork is laid. Debian’s python3-venv package brings a lightweight framework for sandboxed runtimes, while python3-pip supplies Debian's packaged build of pip, which supports PEP 517 builds.

Carving Out a New Environment

Choosing a project folder—perhaps a new directory called project‑demo—the user steps inside and creates an isolated world:

python3 -m venv .venv

That single command spawns a hidden .venv directory containing its own Python binary, site-packages directory, and a copy of pip. The environment is lonely by design, free of the global site-packages that other applications may use.
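The same step can be driven from Python through the standard library's venv module. The sketch below is only an illustration: the helper name create_env and the temporary location are assumptions, and pip is left out (with_pip=False) to keep the example fast, whereas python3 -m venv installs it by default.

```python
import os
import tempfile
import venv
from pathlib import Path


def create_env(env_dir: Path) -> Path:
    """Create a virtual environment and return the path to its interpreter."""
    # with_pip=False skips the pip bootstrap; the command-line form
    # `python3 -m venv` would install pip as well.
    venv.create(env_dir, with_pip=False, symlinks=(os.name != "nt"))
    bin_dir = "Scripts" if os.name == "nt" else "bin"
    exe = "python.exe" if os.name == "nt" else "python"
    return env_dir / bin_dir / exe


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        env_dir = Path(tmp) / ".venv"
        interpreter = create_env(env_dir)
        print("interpreter:", interpreter)
        # Shows the top-level layout, including pyvenv.cfg.
        print("top level:", sorted(p.name for p in env_dir.iterdir()))
```

Listing the top level is a quick way to see what the environment consists of before any package is installed.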

To bring this environment to life, the user must activate it:

source .venv/bin/activate

Upon activation, the shell’s prompt changes, usually gaining the environment’s name as a prefix, to confirm that subsequent commands will run inside this sandbox. The activate script adjusts the PATH variable so that the environment's Python interpreter precedes the system-wide one. It also sets VIRTUAL_ENV, which tools and scripts can read to see which environment is active.
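From inside Python, a common way to check whether the interpreter belongs to a virtual environment is to compare sys.prefix with sys.base_prefix. The short sketch below shows that check next to the VIRTUAL_ENV variable; the function name in_virtualenv is just an illustrative choice.

```python
import os
import sys


def in_virtualenv() -> bool:
    """Return True when the running interpreter belongs to a virtual environment."""
    # Inside a venv, sys.prefix points at the environment, while
    # sys.base_prefix still points at the installation it was created from.
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("in virtualenv:", in_virtualenv())
    print("sys.prefix:", sys.prefix)
    # VIRTUAL_ENV is exported by the activate script; it is absent when the
    # environment's interpreter is started directly without activation.
    print("VIRTUAL_ENV:", os.environ.get("VIRTUAL_ENV", "<not set>"))
```

The prefix comparison works whether or not the environment was activated, which is why it is usually preferred over reading VIRTUAL_ENV alone.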

Getting Packages Into the Mix

With the environment awake, the user turns to pip to bring in the necessary libraries. The first step is to keep the tool itself up to date, because the newest pip versions (23.3 today) include critical security fixes and support for new authentication mechanisms:

pip install --upgrade pip

Now, installing a real dependency such as the HTTP client httpx is straightforward:

pip install httpx[http2]

Notice the square brackets: they instruct pip to pull in optional extras that enable HTTP/2 support. The module is now isolated within project-demo/.venv/lib/python3.11/site-packages, keeping it separate from any legacy packages on the system.

If the developer wants to ensure reproducibility, they can generate a lock file by running:

pip freeze > requirements.txt

This requirements.txt can be committed to version control, and later teammates or deployment scripts can recreate the exact environment with:

pip install -r requirements.txt

Graceful Shutdown

When the day’s work is finished, the user deactivates the sandbox with a single word—

deactivate

The shell prompt returns to its former state, and the system’s default Python interpreter is once again the one that will run on the command line. The virtual environment lives on, ready for its next adventure, tucked safely beneath the project’s directory.

In this way, by updating the base system, creating a fresh .venv, activating it, and using pip to install only the packages that matter, developers on Debian Linux can keep their projects clean, secure, and reproducible. The narrative of this workflow unfolds every time a new Python package is installed, reminding us that isolation is a tool and not a luxury, especially in a rapidly evolving ecosystem like Python 3.11 on Debian Bookworm.

A Fresh Start

When a developer named Alex first spun up a new Debian system, the night sky seemed thin and unmoored. He wanted a clean, isolated laboratory to experiment with Python libraries, but the corners of his mind were cluttered with the memory of mixing and matching packages the old ways. The desire for a tidy, repeatable environment was the first spark that lit his nightly code sessions.

The Virtual Space

Alex began with the traditional route: sudo apt-get install python3-venv laid the groundwork. He created an environment directory inside his project folder, ~/myproject/env, with python3 -m venv env. At first, everything felt sound; after all, the virtual environment insulated his code from the global Python tree. But each step thereafter (a new package, a version, an optional dependency) reintroduced a slow, manual checklist. The command source env/bin/activate felt reliable yet tired.

A New Tool Arrives

Then, in a tale worth telling, Alex discovered uv, a modern Python package and project manager from Astral, written in Rust. Rather than wrapping pip, uv reimplements its familiar interface: the command uv pip install requests downloads packages, resolves dependencies, and caches the results, all in a single line.

Speed and Simplicity

The results were astonishing. An installation that previously took minutes now finished in seconds. Forum chatter from the Debian community noted that uv’s cache warms up after the first install, so subsequent installs of the same packages happen almost instantaneously. The stability felt almost poetic: “Plenty of wheels, little friction.”

The Art of Dependency Management

Beyond raw speed, uv opens up a new canvas for dependency mapping. With uv pip install -r requirements.txt, Alex could install all necessary libraries without manually setting environment variables. uv's documentation (https://docs.astral.sh/uv/pip/) explains how the tool finds your venv or a system interpreter, so you never lose control over the Python binary your code talks to. That assurance in an uncertain landscape, like the way Debian may move its packages between releases, gave Alex confidence to deploy across many machines.

Wrapping Up

In the end, the narrative that tickled Alex’s curiosity came full circle on his own home screen. His Debian installation now boasts a sleek, dependable virtual environment, and the new uv pip install command sits at the heart of it—all while keeping the environment simple and reproducible. The experience taught him that, in the fast-moving world of Python development on Linux, a sharp tool like uv brings consistency, speed, and a touch of modern wonder to every run.

From the Root of Debian to a Virtual Realm

When Alex first logged into his fresh Debian 12 machine, the terminal felt like a blank canvas. He wanted to write Python code but was wary of the system library growing cramped with old or conflicting packages. “I’ll keep my projects tidy,” Alex thought, ready to start a new virtual Python environment.

Setting Up the Forge

Debian ships with the python3-venv package. Installing it was a one‑line command:

sudo apt-get install python3-venv

After that, Alex created a directory named project‑demo and inside it, he created the first venv:

python3 -m venv myenv

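This single command built an isolated environment folder. Inside it, myenv/bin holds a python executable (by default a symlink to the system interpreter) and pip, while installed packages go into the environment's own site-packages directory; the standard library is still read from the base installation. The holy grail of isolation was now at his fingertips.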
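What ties an environment folder to its base interpreter is recorded in a small text file, pyvenv.cfg, at the top of the folder. The sketch below reads that file from a throwaway environment; read_pyvenv_cfg and the temporary myenv path are illustrative names, not part of any library.

```python
import os
import tempfile
import venv
from pathlib import Path


def read_pyvenv_cfg(env_dir: Path) -> dict[str, str]:
    """Parse the 'key = value' lines stored in an environment's pyvenv.cfg."""
    settings = {}
    for line in (env_dir / "pyvenv.cfg").read_text().splitlines():
        key, sep, value = line.partition("=")
        if sep:  # ignore any line without an '=' separator
            settings[key.strip()] = value.strip()
    return settings


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        env_dir = Path(tmp) / "myenv"
        # pip is skipped here only to keep the demonstration quick.
        venv.create(env_dir, with_pip=False, symlinks=(os.name != "nt"))
        for key, value in read_pyvenv_cfg(env_dir).items():
            print(f"{key} = {value}")
```

The home entry names the directory of the base interpreter, and include-system-site-packages shows whether the environment can see globally installed packages.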

Activating the Chimera

Before any script could run Python within this environment, it had to be activated. Alex remembered a line tucked into Debian’s man pages:

source myenv/bin/activate

He tucked that line into a wrapper script called run‑app.sh:

#!/usr/bin/env bash

source myenv/bin/activate

python main.py

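When Alex ran ./run‑app.sh, the activation applied only inside the script's own shell process: main.py ran with the environment's interpreter, while his interactive prompt stayed as it was. Had he typed source myenv/bin/activate directly in his terminal, the prompt would have shown “(myenv) user@machine …” instead. In both cases source matters, because it runs activate in the current shell so that the updated PATH and environment variables persist there.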

Deactivation: Returning to the Motherland

After the script completed, Alex’s next thought was how to cleanly leave the sandbox. The deactivate function is bundled with myenv/bin/activate; invoking it resets the prompt and path to their original values:

deactivate

In the wrapper script, he added the line right after the python command, so the environment was automatically torn down once finished:

python main.py

deactivate

This simple pattern—activate, run, deactivate—scales effortlessly. By embedding the source and deactivate commands into a single script, Alex kept his workflows reproducible and his Debian system unpolluted.

Best Practices & Modern Tendencies

On current Debian releases the built-in venv module covers most needs, so the separate virtualenv package is rarely required; dependencies are pinned in requirements.txt. Scripts can also use exec to replace the shell process with the Python interpreter, reducing process overhead:

exec python main.py

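Because exec replaces the shell with the Python process, which inherits the activated environment, any deactivate line placed after it never runs, and none is needed: the activation lived only in that process and disappears with it. For long‑running services, the same reasoning applies, and starting the environment's interpreter directly keeps the service's environment variables limited to what it actually needs.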

A Tale of Caution

One day Alex tested his wrapper on a schedule. The scheduler launched run‑app.sh every night. He noticed an anomalous error about a missing library. Digging into logs, he discovered the scheduler’s shell was not activating the environment each time. By incorporating the same source and deactivate routine into the scheduled job, the issue vanished: the environment was always freshly initialized and torn down, leaving the base Debian untouched and the virtual world coherent.
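One way to make a scheduled job independent of shell activation is to start the environment's interpreter by its full path, since an interpreter launched from the environment's bin directory uses that environment's packages. The sketch below assumes the layout from this story (a myenv folder and main.py inside the project directory, POSIX paths); env_python and run_in_env are hypothetical helper names.

```python
import subprocess
from pathlib import Path


def env_python(project_dir: Path, env_name: str = "myenv") -> Path:
    """Return the absolute path of the environment's interpreter (POSIX layout)."""
    # Only the project directory is resolved. The interpreter path itself is
    # left unresolved, because following its symlink would lead back to the
    # system interpreter and bypass the environment.
    return project_dir.resolve() / env_name / "bin" / "python"


def run_in_env(project_dir: Path, script: str = "main.py") -> int:
    """Run a script with the environment's interpreter; no activation needed."""
    # cwd is set explicitly so the job does not depend on the directory
    # the scheduler happens to start it in.
    result = subprocess.run([str(env_python(project_dir)), script], cwd=project_dir)
    return result.returncode
```

A scheduler entry could then call a small launcher that runs run_in_env(Path("/path/to/project")), where the path is whatever directory holds the project.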

Through these practices, Alex kept his Debian machine lean, his Python projects isolated, and his scripts reliable. The story of virtual environments on Debian becomes simply a story of good habits: activate, work, deactivate. Each cycle restores harmony between the base system and the project realm, echoing the calm of a well‑ordered CLI landscape.

Setting the Stage

In the early days of my Debian adventures, I found myself wrestling with a growing stack of Python dependencies that seemed to sprout like weeds in a garden. Each project demanded its own cousins of Django, Flask, or a particular version of NumPy, yet the system Python felt like an overenthusiastic host that refused to let me keep the guests in separate rooms. It was clear that a clean solution was required.

The First Creation

My first meeting with virtual environments happened when I installed the Debian package python3-venv using sudo apt-get install python3-venv. I then created the first sandbox: python3 -m venv venv. The environment appeared inside a small folder named venv, a neat castle that kept all the libraries inside its walls. From the moment I typed source venv/bin/activate, the shell changed its prompt to show the name of the environment, a visual cue that I was now inside a protected realm. Every subsequent pip install would populate that secluded space, never touching the global site-packages.

Keeping the Environment Healthy

Once the environment stood firm, I learned the importance of keeping it tidy. A daily ritual of upgrading the package manager proved essential: pip install --upgrade pip setuptools wheel. Each project now received a requirements.txt file that stored the exact versions I needed. Whenever I added a new dependency, I ran pip install PACKAGE --upgrade followed by pip freeze > requirements.txt to lock the state. Such practices ensure that rebuilding the environment later yields the same results.

To aid environment restoration, I sometimes used pip-tools: I kept only top‑level packages in a short requirements.in and ran pip-compile to resolve the transitive dependencies into a fully pinned requirements.txt. I also made a habit of removing unused packages, running pip-autoremove when necessary, and inspecting the environment with pip list --outdated to keep everything current without introducing breaking changes.

Debian’s own tools lent a hand as well: I routinely checked that the kernel and system libraries were up to date with apt update && apt upgrade, ensuring that the Python runtime remained stable. When deployment became a consideration, I configured the virtual environment in a way that launched automatically through systemd services or Docker containers, keeping isolation intact even in a production setting.

Seamless Deployment

When I finally moved the project to a Debian server, I reproduced the environment by running python3 -m venv venv on the host and then pip install -r requirements.txt, rather than copying the venv folder itself: a virtual environment records absolute paths and is not meant to be relocated. The server’s shell again displayed the environment prefix, reassuring me that I was not accidentally mixing global packages. A small script that activated the environment before starting the application, source venv/bin/activate && exec gunicorn app:app, became a recurring pattern that minimized human error. Each deployment cycle confirmed that the isolation worked, making upgrades and rollbacks safe exercises.
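The rebuild route can be scripted so that every deployment runs the same two steps. This is a minimal sketch assuming a POSIX layout with a venv folder and a requirements.txt in the project directory; rebuild_commands and rebuild are hypothetical helper names.

```python
import subprocess
import sys
from pathlib import Path


def rebuild_commands(project_dir: Path) -> list[list[str]]:
    """Return the commands that recreate the environment from requirements.txt."""
    env_dir = project_dir / "venv"
    return [
        # Step 1: create the environment with the interpreter running this script.
        [sys.executable, "-m", "venv", str(env_dir)],
        # Step 2: install the pinned packages using the environment's own pip.
        [str(env_dir / "bin" / "python"), "-m", "pip", "install",
         "-r", str(project_dir / "requirements.txt")],
    ]


def rebuild(project_dir: Path) -> None:
    """Run each command in order and stop at the first failure."""
    for command in rebuild_commands(project_dir):
        subprocess.run(command, check=True)
```

Calling pip through the environment's interpreter (python -m pip) avoids any doubt about which pip is first on PATH.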

Beyond the Basics

As my familiarity grew, I explored advanced tooling. pyenv allowed me to switch between Python interpreters, while direnv automatically activated the right virtual environment when I entered the project directory. I could also use pipx to install command‑line tools in isolated spaces that did not interfere with project dependencies. All these tools respect Debian’s packaging philosophy: they supplement the native package manager without replacing it.

In story terms, the Debian system became a dependable host that enforces clean boundaries. Each virtual environment is a self‑contained kingdom where dependencies live in harmony, can be rebuilt on demand, and can be updated safely. By choreographing these spaces with consistent commands, documentation, and automation, I turned a once chaotic configuration into a predictable, maintainable narrative—a story of intentional beginnings, disciplined maintenance, and reliable endings.

Curious Beginnings

It all began when Alex, a budding data scientist, found herself tangled in a web of conflicting dependencies. Every time she cloned a project, libraries of different versions piled onto her machine like a chaotic quilt. She needed a solution that would keep each environment tidy, an approach that would keep her work reusable and predictable.

Enter the Virtual Enchantress

On a quiet night, after scrolling through a forum thread titled “Virtual Environments Made Simple,” Alex discovered the secret weapon: Python’s built‑in venv module. By cloaking her project in its own sandbox, she finally had a clean slate each time she started a new experiment. The command line whispered, “python -m venv myenv,” and a new folder was born, ready to hold only the libraries that mattered.

Collecting Snapshots

Once the environment was active, Alex needed a way to capture the exact state of her dependencies. She turned to pip freeze, a frosty list that shows the precise packages and their versions. Running pip freeze > requirements.txt crystallized her environment into a single text file—a snapshot that could be shared, reproduced, or restored at any moment.
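To see what such a snapshot contains, the installed distributions can also be listed from Python with importlib.metadata. The sketch below produces freeze-style name==version lines; it is an approximation for illustration (the function name snapshot is an assumption), and pip freeze itself remains the tool to use, since it handles editable and VCS installs and skips packaging tools by default.

```python
from importlib import metadata


def snapshot() -> list[str]:
    """List installed distributions as 'name==version' lines, sorted by name."""
    pins = set()
    for dist in metadata.distributions():
        info = dist.metadata
        name = info.get("Name") if info else None
        if name:  # skip entries whose metadata is missing or damaged
            pins.add(f"{name}=={dist.version}")
    return sorted(pins, key=str.lower)


if __name__ == "__main__":
    print("\n".join(snapshot()))
```

Run inside an activated environment, the output lists only what that environment can import, which is exactly why the snapshot is meaningful.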

Guarding the Future

With the requirements.txt in hand, Alex could hand it to her teammates. A single command, pip install -r requirements.txt, unfolded the exact same collection of packages onto another developer’s machine. This smooth handoff prevented the dreaded “works on my machine” dilemma and allowed everyone to sprint in lockstep.

Version‑Control and Collaboration

As the project grew, Alex included the requirements.txt file in her git repository. Each commit became a traceable ledger of dependencies. When a module received an update, she could test it in isolation, regenerate the requirements file, and then merge the changes confidently knowing that others could replicate the environment by installing from the updated text file.

Keeping the Environments Alive

Periodically, Alex refreshed the snapshot with pip freeze --all > requirements.txt. The --all flag also lists packaging tools such as pip and setuptools, which pip freeze leaves out by default, guarding against hidden surprises. Whenever a library was added, she reran the command and committed the result, so people who pulled the latest commit would get the new dependency during their next install.

Beyond the Basics

While the pip freeze technique was powerful, Alex explored other tools that respected the same philosophy. She watched pip-tools turn requirements.in into a pinned requirements.txt, ensuring reproducibility with a tidy dependency graph. She also tried poetry to combine dependency resolution, packaging, and virtual environment handling into a single command suite.

Legacy and Lessons

Now, many projects in Alex’s organization adopt the same ritual: start with a fresh venv, install packages, run pip freeze > requirements.txt, commit the file, and distribute it like a recipe. The narrative of managing packages has shifted from chaotic juggling to careful documentation. The cryptic code that once threatened to unravel their work is now a written record, a trusted companion that guides future developers through the labyrinth of dependencies.

A Final Toast

With a quiet click, Alex exits her virtual environment and looks back at her simplified workflow—a testament to the power of a clean snapshot and a well‑maintained README. The experience proved that even in a world where libraries evolve at breakneck speed, a stolid pip freeze and a humble requirements.txt can anchor you to a stable, collaborative reality. Cheers to the quiet heroes behind every reproducible Python project!

Discovering the Virtual Realm

In the bustling city of Codeville, Elena woke up to a new challenge: her freelance client asked her to build a web scraper that would run on the newest Python 3.12. The next project, a data‑analytics suite, still demanded Python 3.8. Elena knew that the only way to keep the two worlds separate was to create isolated environments that could each cling to its own interpreter.

Choosing the Right Python Version

Elena turned to the tools of the trade. First, she installed pyenv, the modern version manager that keeps a tidy collection of Python builds. With one command, she could pull in Python 3.12: pyenv install 3.12.2. She then bootstrapped a new virtual environment for the scraper by running pyenv local 3.12.2 to set the project’s local interpreter, followed by python -m venv .venv. For the analytics suite, she repeated the same ritual for Python 3.8.10, ensuring that every library pulled from one environment never strayed into the other.
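When two projects depend on different interpreters, a small guard at the top of each entry point can catch the wrong one early. The following sketch is an assumption about how such a check might look; check_interpreter and require_interpreter are hypothetical names.

```python
import sys


def check_interpreter(required: tuple[int, int]) -> bool:
    """Return True when the running interpreter matches the required major.minor."""
    return sys.version_info[:2] == required


def require_interpreter(required: tuple[int, int]) -> None:
    """Stop early with a readable message if the wrong interpreter is in use."""
    if not check_interpreter(required):
        found = ".".join(map(str, sys.version_info[:2]))
        wanted = ".".join(map(str, required))
        raise SystemExit(f"expected Python {wanted}, but running {found}")
```

The scraper's entry point could call require_interpreter((3, 12)) and the analytics suite require_interpreter((3, 8)), so a mistakenly activated environment fails immediately instead of midway through a run.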

Building a Nest of Dependencies

Inside each environment, Elena used an up‑to‑date package manager: pip 24. She declared her requirements in a simple requirements.txt, but she also took advantage of pip’s editable mode for modules she was still developing. When installing, she did not use wildcard patterns, because she understood that pinning an exact version with == is what makes an install reproducible. The scraper, for example, required requests==2.31.0 and beautifulsoup4==4.12.2, while the analytics stack, limited by Python 3.8, leaned on pandas==2.0.3 and numpy==1.24.4, the last release lines of those libraries to support that interpreter. Each library lived in its own sandbox, charted by its interpreter, and never crossed paths with the other.
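A quick check can flag requirement lines that are not pinned with ==. The sketch below is deliberately simplified (it treats everything after # as a comment and skips option lines such as -r or -e), and the function name unpinned is an illustrative choice.

```python
def unpinned(requirements_text: str) -> list[str]:
    """Return requirement lines that are not pinned with '=='."""
    loose = []
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        if "==" not in line:
            loose.append(line)
    return loose


if __name__ == "__main__":
    sample = "requests==2.31.0\nbeautifulsoup4==4.12.2\nhttpx>=0.25\n"
    print(unpinned(sample))
```

Running a check like this before committing requirements.txt helps keep loose specifiers from slipping into a file that is meant to be an exact record.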

Maintaining the Sanctum

Elena regularized her workflow. Every time she switched projects, she activated the relevant environment: source .venv/bin/activate. When she updated a package, she did so inside that environment, and after test runs she froze the state with pip freeze > requirements.txt. The freeze command captured the precise versions in use, turning the environment into a snapshot of a particular moment in time. Elena also learned to use pip‑compile from pip‑tools to lock dependencies, ensuring that future developers could recover precisely the same dependency tree.

Bridging the Worlds

Occasionally, Elena needed to start a new project with an ancient Python version that was no longer shipped with pyenv. She used pyenv-virtualenv, a pyenv plugin, to create a named virtual environment tied to one specific interpreter while staying isolated from every other environment. This allowed her to support legacy code without contaminating the newer environments. Whenever she migrated a project, she ran pip install --upgrade pip pip-tools setuptools wheel to keep the tooling fresh.

In Conclusion

By weaving careful threads between pyenv, venv, and pip, Elena maintained a tapestry of clean, reproducible Python worlds. Each environment became a self‑contained kingdom, holding its own interpreter, its own libraries, and its own secrets. In the end, the client’s code ran as promised: the scraper danced on Python 3.12, while the analytics suite hummed safely on Python 3.8. The moral was clear: when you need independent, separate Python versions, treat every environment like a castle under its own guard, and you shall never fall victim to cross‑project contamination.

In the quiet glow of his workstation, Alex felt the familiar pull of uncertainty that only a new feature can bring. He had just started a fresh project, and the first order of business was to bring his codebase under version control. Yet before he could commit the first line of code, a whisper of wisdom from the older part of his brain nagged at him: **never commit the virtual‑environment folder**.

Entering the Virtual Realm

The moment he activated the new environment, a light‑blue console window flared, and Alex realized he was now standing inside a tidy island of dependencies. This isolation was a gift: no stray packages from other projects, no loss of sanity when the framework upgraded. The console offered a simple command, python -m venv env, but the true treasure existed in what came after. He opened a blank requirements.txt file and thought, *if only this could live in Git*. Before long, he found that the single command pip freeze > requirements.txt could capture a snapshot of the environment, one that pip install -r requirements.txt could later use to recreate the environment on any machine.

The Code Keeper’s Manifesto

Alex remembered the story of the first Git commit he made. It had been a flurry of files, rules, and un‑tracked wanderings. Now he knew he had to be more deliberate: the **requirements.txt** file should live in the repository; it was the contract that promised reproducibility. By adding that file to Git, he could guarantee that anyone who cloned the repository and ran pip install -r requirements.txt would arrive at the same state, regardless of their local setup. He typed the command and saved the file. When preparing to commit, he stared at the working directory, shook his head, and noted that the environment folder was only a fleeting by-product of the code, never a deliberate feature.

Guarding the Virtual Sanctum

Alex opened the .gitignore file and glanced at the instructions from the course he had just finished. Under the section titled “Python”, he made sure the virtual‑environment folders were listed:

# Virtual environments

.venv/

env/

*I place “env” beside “.venv” like twin guardians*, he whispered. With that pattern in place, Git would skip the environment folder instead of tracking it. The commit history would no longer include dozens to hundreds of compiled binaries, and the repository would remain lightweight.

Modern Wizards of Dependency

In the years since he started this project, Alex had watched as the world of Python dependency management evolved. The town crier “Pip‑Tools” announced pip-compile, a tool that dreams of frozen lockfiles. The next challenger, Poetry, had gathered a following, offering a single command poetry export --format=requirements.txt that both locked packages and honored groupings. But regardless of which spell he cast, Alex knew the principle that should always guide him: **commit the declarative file, never the environment itself**.

When he later shared his repo on GitHub, colleagues appreciated the clarity. Some added "venv" to the editor’s ignore list; others used Docker to encapsulate the entire flavor. Yet all agreed that the heart of the matter lay in that single, elegantly simple file, weaving the code to its dependencies with a bridge of text rather than a bridge of binaries.

Under the calm hum of his monitor, Alex slid the cursor to the last line of the requirements.txt:

# If you want to pin exact versions for reproducibility, run:

# pip freeze > requirements.txt

He smiled, knowing the repository would blossom with other developers who respected the balance: a living, breathing codebase, a nearly invisible environment that served its purpose, and an unblinking commitment to transparency through the humble requirements.txt.

The Beginning of the Project

When Alex first embarked on a new Python adventure, she realized that her code needed a clean, isolated environment where dependencies could grow without touching the rest of the system. She opened her terminal, leaned over the filesystem, and decided that her source code files would live in a dedicated, well‑structured project/ directory while the virtual environment would remain separate under venv/.

Choosing the Right Tool

A quick search of the latest Python ecosystem revealed that pip, Pipenv, and Poetry still dominate the scene. Alex liked Poetry’s ability to automatically lock dependencies, but she also kept an eye on the newer pipx project, which lets you install command‑line tools in isolated environments. In the end, she settled on a hybrid approach: Poetry for the project itself, and pipx for any global utilities she needed later.

Creating the Virtual Environment

Inside project/ she ran:

poetry init

and then:

poetry install

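These commands set up the project and a virtual environment for it. By default Poetry keeps that environment in its own cache directory; setting virtualenvs.in-project to true makes it create .venv/ inside the project instead. Either way, Alex noticed that the source files in project/ remained untouched, and all third‑party packages lived inside the environment, isolated from any other Python projects.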

Managing Packages Modernly

With best practices in mind, Alex kept the poetry.lock file, generated automatically by Poetry, under version control. Whenever a new dependency surfaced, she added it with Poetry, which updated the lock file, and committed the change to Git. This way, other developers could simply run:

poetry install --without dev

and be assured that the exact same package tree would be restored, regardless of when they checked the repository out.

Git Integration

Alex’s repository structure looked like this:

project/
│   pyproject.toml
│   poetry.lock
│   README.md
│   src/
│       __init__.py
│       main.py
└── venv/    # .gitignore excludes this

Because of the .gitignore entry for venv/, Git never tracked the actual environment. This kept the repository lightweight and ensured that contributors could run tests without accidentally pulling in a corrupted environment.
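A small helper can make sure the ignore entries are present before the first commit. This is a sketch under stated assumptions: the pattern names venv/ and .venv/ follow the layouts mentioned in this story, and ensure_ignored is a hypothetical function, not part of Git or Poetry.

```python
import tempfile
from pathlib import Path


def ensure_ignored(gitignore: Path, patterns: tuple[str, ...] = ("venv/", ".venv/")) -> list[str]:
    """Append any missing patterns to .gitignore and return the ones added."""
    text = gitignore.read_text() if gitignore.exists() else ""
    present = {line.strip() for line in text.splitlines()}
    missing = [p for p in patterns if p not in present]
    if missing:
        # Start on a fresh line if the file does not end with a newline.
        prefix = "" if not text or text.endswith("\n") else "\n"
        with gitignore.open("a") as handle:
            handle.write(prefix + "".join(p + "\n" for p in missing))
    return missing


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        target = Path(tmp) / ".gitignore"
        print("added:", ensure_ignored(target))
        print(target.read_text())
```

Because the function only appends what is missing, it can be run repeatedly without duplicating entries.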

Keeping It All in Sync

To streamline the workflow, Alex added a target named poetry-run to her Makefile:

poetry run python src/main.py

Now, even though the Python files were outside the environment, they executed inside the isolated context automatically. This approach protected her local system from dependency drift, while still allowing the code to be freely versioned in Git.

Wrapping Up

Through careful separation of source and environment, coupled with Poetry’s lockfile and a diligent Git workflow, Alex achieved a clean, reproducible development setup. Her story highlights how modern packaging tooling can keep the source code outside the virtual environment, yet still let every dependency be controlled, locked, and shared across teams.

The Quest Begins

In the quiet glow of my terminal on a freshly installed Ubuntu 22.04, I felt the promise of Python unfurl like code on a clean page. I already knew that without a solid environment, my digital experiments would drift from one project to the next like a flock of startled crows. The first step was to ensure that every Python instantiation was cloaked in its own shell, a practice that, over and over, steadied my workflow.

Shaping the Environment

My guiding star was pyenv, the tool that lets a single Linux machine juggle dozens of Python releases. After installing pyenv through curl https://pyenv.run | bash, I installed Python 3.12.0 with pyenv install 3.12.0 and made it the default interpreter with pyenv global 3.12.0. Python 3.12 brought renewed speed and new syntactic sugar. With the interpreter in place, the next move was to create a fresh virtual space for each project. The command python -m venv .venv produced a pristine directory where every package, every dependency, lived in isolation. It was a microstate where my code could breathe without the external storms that hit production deployments.
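The same step can be scripted with the standard library's venv module, which is what python -m venv calls under the hood. The following is a minimal sketch; the helper name create_env is hypothetical, and it passes with_pip=False to stay fast, so the result has no pip until you ask for one.

```python
import tempfile
import venv
from pathlib import Path


def create_env(env_dir: Path) -> Path:
    """Create a virtual environment at env_dir and return its pyvenv.cfg path.

    with_pip=False keeps the sketch quick and avoids needing ensurepip;
    pass with_pip=True for a result closer to `python -m venv`.
    """
    venv.create(env_dir, with_pip=False)
    return env_dir / "pyvenv.cfg"


if __name__ == "__main__":
    # Build a throwaway environment and show the file that marks it as a venv.
    with tempfile.TemporaryDirectory() as tmp:
        cfg = create_env(Path(tmp) / ".venv")
        print(cfg.read_text())
```

The pyvenv.cfg file records which base interpreter the environment was created from, which is a quick way to see which pyenv-managed Python a given .venv belongs to.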

Empowering Stability

Stability in the world of Python means consistently reproducible builds. I began by upgrading pip inside the virtual environment: .venv/bin/pip install --upgrade pip. At the time that gave me pip 23.3, whose dependency resolver copes well with complex dependency graphs. I also installed pip-tools to tighten the knot: .venv/bin/pip install pip-tools. With pip-compile, I turned my loose top-level requirements (by convention a requirements.in file) into a deterministic list that others could trust. The resulting requirements.txt pinned each library, including transitive dependencies, to an exact version, guarding against the wild swings that sometimes surprise a runtime. Every line in that file became a vow of consistency, solidifying my project against the volatile tides.
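One way to keep that vow honest is to check that every entry in requirements.txt really is pinned. The sketch below is a deliberately simple text check, not part of pip-tools; the function name and the sample file contents are made up for illustration, and it does not handle URL or editable requirements.

```python
def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that are not pinned with '=='."""
    loose = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        if "==" not in line:
            loose.append(line)
    return loose


# Hypothetical file contents in the style of pip-compile output.
SAMPLE = """\
# illustrative example only
click==8.1.7
    # via flask
flask==3.0.0
requests>=2.31
"""

if __name__ == "__main__":
    print(unpinned_requirements(SAMPLE))  # ['requests>=2.31']
```

A check like this could run in CI so that a hand-edited, unpinned line never slips into the committed file.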

Framing Flexibility

While stability was a fortress, flexibility was the bridge that let me navigate between ideas. I found this in two places. First, the venv itself is simple to spin up and tear down, encouraging experimentation without wasting time. When I wanted to try a new framework, a fresh environment sprang up in seconds, and when it entered the fray, it stayed isolated from the rest of my ecosystem.

Second, I dove into uv, a modern replacement for pip that is written in Rust and installs packages far faster than pip does. With uv pip install . inside the virtual environment, I watched downloads queue up like a well-ordered subway. Speed, once a luxury, became an essential ally, letting me iterate on more ideas in less time.

A Tale of Once and Future

As I closed my terminal, the lights flickered. My laboratory was now a half-formed narrative: a series of virtual nests, each built on a foundation of Python 3.12, each fortified with pip 23.3 and pip-tools, each quick to rebuild thanks to uv. Every environment was a story in its own right, a chapter that could be handed to a teammate and read exactly the same way. I felt, at last, that the quest for a stable yet flexible Python ecosystem on Linux was not just a lofty goal, but a reality housed in my own terminal. The next line of code, I knew, would be both daring and reliably reproducible, echoing the harmony of the environment I had wrought.

The First Step into the Virtual Realm

In the heart of a bustling Linux workstation, a seasoned developer named Maya spun her cursor at a terminal prompt, ready to carve out a new project space. She whispered, “Let’s keep this tidy.” With a steady hand, she typed: python3 -m venv myproject-env. The command unfurled a miniature, self-contained universe—no interference from the global packages that surrounded her like a storm.

Choosing the Weapon: venv, virtualenv, or the Conda Sphinx?

Maya was not alone. Across the ward, other practitioners debated which tool offered the sharpest edge. venv was the default, part of the standard library (Debian and Ubuntu package it separately as python3-venv) and ever reliable for simple, focused uses. virtualenv added flexibility for older Python versions and worked seamlessly alongside pyenv and pip. For data scientists who craved pre-compiled binaries, Conda arrived as a formidable ally, bundling libraries like NumPy and pandas in a single, fast install. Maya chose the tool that resonated with her current purpose; she needed a micro-service, so venv sufficed.

The Laying of the Groundwork

She entered her environment: source myproject-env/bin/activate. A new prompt appeared, haloed in parentheses. The shell announced that the secluded safety of the virtual world was now active. Maya's next challenge was to marshal the specific packages. With a single, satisfying line, she fetched her dependencies: pip install fastapi uvicorn. The installation logs streamed out like a river of code, each line folding the identity of the environment into its specialized shape.

Isolation: The Guardian of Consistency

As the micro-service began to breathe, Maya noted how the environment stood firm. Python 3.11.2 was fixed in place, and the environment's packages were isolated from the system's site-packages. Because every dependency was resolved against that narrow horizon, she found that her application could be built, tested, and deployed with a predictably repeatable environment. The same script, run on her colleague's machine or in the CI/CD pipeline, would behave the same way as long as the interpreter version and the pinned packages matched, negating the infamous “it works on my machine” dilemma.

Letting the Past Remain to the Left

Maya had invested in a .gitignore that swallowed the myproject-env/ directory, protecting the repository from noise. She then committed a requirements.txt generated by pip freeze > requirements.txt, capturing the exact versions. Moments later, with a simple pip install -r requirements.txt, a fresh clone of her codebase could rebuild the same environment with a single command, no surprises.
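To see roughly what ends up in such a file, the installed distributions can be listed from Python itself. This is a minimal sketch using the standard library's importlib.metadata; it only approximates pip freeze (it does not treat editable or URL installs specially), and the function name frozen_requirements is hypothetical.

```python
from importlib import metadata


def frozen_requirements() -> list[str]:
    """List installed distributions as sorted 'name==version' lines."""
    lines = set()
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        version = dist.metadata.get("Version")
        if name and version:  # skip broken installs with missing metadata
            lines.add(f"{name}=={version}")
    return sorted(lines, key=str.lower)


if __name__ == "__main__":
    print("\n".join(frozen_requirements()))
```

Run with the environment's own interpreter, the output reflects that environment only, which is exactly why the resulting list is safe to commit.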

Story Continues, Configuration Evolves

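When Maya decided to scale her micro-service, she configured systemd to launch it with an ExecStart that pointed straight at the environment's own executable, myproject-env/bin/uvicorn, given as an absolute path. ExecStart does not run through a shell, so it cannot source myproject-env/bin/activate directly, and it does not need to: the scripts in the environment's bin directory already run under the environment's interpreter. The service remained encapsulated, its environment unchanged, while the host system supplied the necessary resources.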
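Executables installed in a virtual environment can be called by path without sourcing activate, which is what makes them convenient for a service manager. The sketch below builds such a command line on Linux; the directory /srv/app and the application reference main:app are assumptions for illustration, not details from Maya's setup.

```python
from pathlib import Path


def venv_executable(env_dir: str, name: str) -> Path:
    """Return the path of an executable inside a virtual environment (POSIX layout)."""
    return Path(env_dir) / "bin" / name


def service_command(env_dir: str, app: str) -> list[str]:
    """Build the argument list that starts uvicorn from the environment."""
    return [str(venv_executable(env_dir, "uvicorn")), app]


if __name__ == "__main__":
    # Hypothetical install location and ASGI application reference.
    print(service_command("/srv/app/myproject-env", "main:app"))
```

The script in bin/ starts with a shebang line that names the environment's interpreter, so the right packages are found even though no activation step ran.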

Beyond the Wide‑Open Horizon

In the weeks that followed, Maya discovered that powerful Python virtual environments were not merely containers; they were a mindset. By tightly focusing on a single application and isolating every dependency, she crafted environments that were lightning-fast to create, reliably reproducible, and, above all, less likely to become a source of friction when the code moved between machines.

When the Dependencies Started Colliding

Alex had just finished a thrilling feature for a data-analysis pipeline. The code ran perfectly in local tests, but in the shared staging environment the same script failed midway, complaining about incompatible versions of pandas and numpy. The team’s Python base image had an old pandas release, while Alex’s new library, pyarrow, required numpy 2.0. The trouble? The container’s single, global Python interpreter was a tangled web of dependencies that refused to play nice.

A Tale of Two Virtual Environments

“Maybe I should switch to a virtual environment,” Alex thought, recalling a vague README that mentioned a tool called venv. In February 2026, the Internet burst with tutorials updating the canonical way to isolate Python projects. Besides the built-in venv, developers were now favouring pipx for installing command-line tools, poetry for project building, and pyenv for managing multiple Python interpreters. The new twist? Most popular packages now shipped pre-compiled wheels, which avoided the dreaded long build times.

Alex crafted a fresh virtual environment in a clean directory:

```bash
python3.12 -m venv myenv
source myenv/bin/activate
pip install --upgrade pip
```

Now, inside the shell, every dependency lived in isolation. A subsequent pip install resolved the exact numpy and pandas versions required by the script. No more clash with the system packages. Alex repeated the steps in a second environment for the staging deployment, naming it staging_env and pinning the package versions to the same pyarrow release. The result? Two separate, clean builds that ran side by side, each under its own interpreter.

Guarding the Future from Conflicts

The story of Alex’s project illustrates a lesson that the community has learned by 2026: favouring multiple virtual environments is simply safer than juggling tangled dependencies in a single installation. To keep the peace, the following practices have become standard on Linux:

- Each project, even those that run on the same server, keeps its own environment in ./venv, so every install is reproducible.
- Project metadata is stored in a pyproject.toml file. Tools such as poetry read this file and lock dependency versions before building wheel files, guaranteeing the same environment on every machine.
- Interpreter versions are switched explicitly: pyenv allows developers to move between Python 3.8, 3.10, and 3.12 without altering the base OS.
- When a library changes its API, containers built against the old version fail in testing before the new version is pushed, alerting the team to redeploy from a fresh virtual environment.

Through a blend of isolation, exact versioning, and modern tooling, Alex’s pipeline no longer faces those stubborn dependency fiascos. The same code that once stumbled now climbs through the stages with confidence, and the entire team can breathe easy in the world of Python on Linux.

The Tale of Two Virtual Environments

In a quiet Linux laboratory, a lone developer named Maya was preparing to launch her next grand project. She needed a clean, isolated world to test, experiment, and ultimately deploy her code without shared baggage. The old habit of running everything on one shared interpreter would no longer suffice: her tasks spanned web services, machine-learning pipelines, and IoT firmware. Each of these domains demanded a unique, tightly controlled runtime environment.

Choosing the Right House

Maya first discovered the built-in venv module that ships with Python 3.x. It is lightweight, trustworthy, and needs no third-party installation. With a few commands she created a cloistered directory, python -m venv env-prod, and activated it with source env-prod/bin/activate. Inside this little kingdom, libraries could flourish without touching the system tree.

But the plot thickened. Her machine-learning work required CUDA bindings, while her micro-controller scripts were piling on Raspberry Pi specific modules. No single environment could hold them all together, least of all the global one. Maya had to find more adaptable tools.

Enter the Portables

Next came pyenv, a friend who lets a single Linux user keep many Python interpreters side by side. With a simple pyenv install 3.12.2, Maya could host a 3.12 interpreter for her newest web API, and then select it for the current shell session with pyenv shell 3.12.2. pyenv also opened the door to pyenv-virtualenv, a plugin that marries per-interpreter control with venv-style isolation.
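When several interpreters live side by side, it helps for a project to verify that it was started with the one it expects. The sketch below assumes the project pins its interpreter in a .python-version file, the file pyenv reads for per-directory selection (written by pyenv local, which the story above does not use), and that its first entry is a plain version number. The function name is hypothetical.

```python
import platform
from pathlib import Path


def matches_pinned_version(project_dir: Path) -> bool:
    """Compare the running interpreter with the version in .python-version."""
    pin_file = project_dir / ".python-version"
    if not pin_file.exists():
        return True  # nothing pinned, so nothing to violate
    entries = pin_file.read_text().split()
    if not entries:
        return True  # empty file, treat as no pin
    pinned = entries[0]
    running = platform.python_version()
    # A pin such as "3.12" is treated as a prefix of "3.12.2".
    return running == pinned or running.startswith(pinned + ".")


if __name__ == "__main__":
    if not matches_pinned_version(Path.cwd()):
        raise SystemExit("Interpreter does not match .python-version")
```

A guard like this turns a silent mismatch between pyenv's selection and the project's expectation into an immediate, readable error.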

Maya also tested Conda, the renowned cross-language package manager, which is especially handy for data-science stacks that depend on specific pre-built binary packages. Running conda create -n ml-env python=3.12 numpy scipy pandas took minutes and produced a ready-to-use conda environment; GPU-enabled libraries could then be added to it from conda channels.

More Rooms, More Possibilities

Every new project was a new room. A web server might use venv with Django, a Bash script might run in its own container image, while an IoT firmware build needed a virtualenv with target-specific toolchains. Combining these tools, Maya built a Container-as-Code setup of her own on top of Docker. A docker-compose.yaml defined dozens of inter-linked services, each backed by a tiny virtual environment that had only the right libraries pulled from the relevant PyPI mirror.

Endless Purpose, One Dedicated Space

As seasons passed, the Linux lab became a living catalog of specialties. John’s data‑analysis cabin stored only Big‑Data tools, Ruby‑scripting pods twirled around Ruby‑based CRMs, while the home automation area kept a lightweight asyncio environment alive. The only common link between them was the strict isolation granted by virtual environments, ensuring that an obscure version of requests would never ripple into another project.

The Moral

Maya learned that a vast planet like Linux offers many roads: venv for speed, pyenv for interpreter variety, conda for heavy data science, and containers for truly distributed solutions. When you layer these tools correctly, the sandbox ecosystem becomes almost limitless, like a frontier where every line of code can run in its own sunlit canyon. This narrative, written in the blazing light of Python’s latest releases, reminds every developer that the power of isolation is the key to scaling up without crumbling under dependencies.

© 2020 - 2026 Catbirdlinux.com, All Rights Reserved. Written and curated by WebDev Philip C.