As a podcaster, video creator, or voiceover artist, how you sound is a major part of your brand: it identifies you to the people who consume your type of content. Think about it. Can you imagine pulling up the latest stream of the Lions Led by Donkeys Podcast and hearing a strange voice other than Joe Kassabian's? Not only the voice and diction, but also the spectrum and amplitude dynamics are part of the identifiable product. Are any fans of the Nicole Sandler Show reading this? You would probably suspect that something was wrong with the world if you streamed her show and heard something other than her somewhat bass-boosted voice with a touch of hard clipping. The sound is part of the product, and equalization is a key aspect of the sound.

When discussing audio equalization for podcasters and other creators of voice content, think of it as a process which begins at the microphone and ends at the last stage of amplitude compression. It is not limited to the EQ stage alone, because microphones have frequency responses which vary from model to model. The response is also affected by the directional pattern and the proximity effect. When you are closer to the microphone, especially within a few centimeters, the audio is more bassy. Voices are less bassy if off-center and farther away. At the tail end of the signal chain, multiband compression or limiting affects the associated frequency bands much as an equalizer does.

Spectral Ranges of the Human Voice

The human voice is not one nebulous thing, but a combination of components carrying various sounds (symbols) to convey language. We have two vocal cords which produce the sound in our throats. Our windpipes, mouths, and related bones and soft tissues work together to create the vocal sounds picked up by the microphone. Use your favorite sound editor or spectrogram application to actually look at your voice's spectral components. In Catbird Linux, there are currently two tools for this: Audacity and Audioprism. There are decent tools for Windows and Mac systems too.

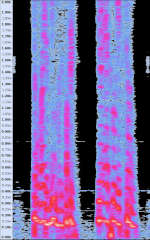

Here is a spectrogram of my voice, taken from my first "Kick the Hornet's Nest" video. Note the distribution of audio energy as I say, "your ass will get stung."

When examined spectrally, the voice has its lowest components somewhere above 100 Hz, possibly as high as 200 Hz. That fundamental tone has harmonics reaching up through 1000 Hz, in proportions giving each person their recognizable sound. Sibilant and other unvoiced consonant sounds, such as "F", "T", "P", or "S", are broad in spectrum, found in the range from 2000 to 6000 Hz. Certain sounds are "plosive" because they involve small blasts of air which can cause an unpleasant pop or boom in the mic output.
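
If you would rather get a number than eyeball a spectrogram, here is a minimal sketch of one way to estimate where the fundamental sits. It uses the Goertzel algorithm (my choice for illustration, not something the tools above necessarily use) to measure energy at candidate frequencies and pick the strongest. The signal is a synthetic stand-in for a voice, and the names `synth_voice`, `goertzel_power`, and `estimate_fundamental` are made up for this example. With a real recording, you would load your own samples instead.

```python
import math

def synth_voice(sample_rate=16000, seconds=0.1, f0=120.0):
    """Crude stand-in for a voiced sound: a fundamental plus two weaker harmonics."""
    n = int(round(sample_rate * seconds))
    return [
        math.sin(2 * math.pi * f0 * i / sample_rate)
        + 0.5 * math.sin(2 * math.pi * 2 * f0 * i / sample_rate)
        + 0.25 * math.sin(2 * math.pi * 3 * f0 * i / sample_rate)
        for i in range(n)
    ]

def goertzel_power(samples, sample_rate, freq):
    """Return the signal power at `freq` (Hz) using the Goertzel recurrence."""
    w = 2 * math.pi * freq / sample_rate
    coeff = 2 * math.cos(w)
    s1 = s2 = 0.0
    for x in samples:
        s0 = x + coeff * s1 - s2
        s2, s1 = s1, s0
    return s1 * s1 + s2 * s2 - coeff * s1 * s2

def estimate_fundamental(samples, sample_rate, lo_hz=60, hi_hz=400, step_hz=10):
    """Scan candidate frequencies and return the one carrying the most power."""
    candidates = range(lo_hz, hi_hz + 1, step_hz)
    return max(candidates, key=lambda f: goertzel_power(samples, sample_rate, f))

voice = synth_voice()
print(estimate_fundamental(voice, 16000))  # 120 for this synthetic signal
```

This only works when the fundamental is the strongest component in the scanned range, which is an assumption. On a real voice, take a steady vowel and compare the result against what the spectrogram shows.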

Equalization is used to adjust the ratios of the various vocal spectral components to improve the clarity, reduce harshness, and give character to the voice. Here is a list of suggestions for working with EQ to find your perfect vocal sound:

- Look at the unmodified spectrum of your voice, in detail, to know where the bottom notes are - the fundamental and first couple of harmonics.

- Know where the upper harmonics fade and give way to the sibilant sounds.

- Set your microphone for its flattest response to capture all parts of your voice.

- Set your microphone gain (and all later gains) so the signal never goes into clipping or overloading.

- Use EQ to reduce sounds below about 80 Hz to 100 Hz to get rid of rumble noises in your environment.

- If you want a deeper vocal sound, speak close to the mic and/or boost your fundamental note a few dB.

- If the vocals are "muddy" or not so clear, try reducing the 100 Hz to 300 Hz range.

- Boosting vocal components between 2000 Hz and 6000 Hz can improve clarity. Look at the spectrum and work with the sibilant sounds.

- If there is too much sibilance, reduce the range from 2000 Hz to 6000 Hz.

- Be consistent in your speaking technique to maintain your best quality and character.
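
To make a few of the suggestions above concrete, here is a minimal sketch of a three-stage EQ chain: a high-pass at 80 Hz for rumble, a small cut at 200 Hz for mud, and a small boost at 4000 Hz for clarity. It uses the widely published biquad formulas from Robert Bristow-Johnson's Audio EQ Cookbook. The 48000 Hz sample rate, the Q values, the exact gains, and all function names are assumptions for illustration, so treat the numbers as a starting point, not a recipe.

```python
import cmath
import math

def _normalize(b, a):
    """Scale coefficients so a[0] == 1."""
    return [x / a[0] for x in b], [1.0, a[1] / a[0], a[2] / a[0]]

def highpass(sample_rate, f0, q=0.7071):
    """Second-order high-pass biquad (RBJ cookbook form)."""
    w0 = 2 * math.pi * f0 / sample_rate
    alpha = math.sin(w0) / (2 * q)
    cw = math.cos(w0)
    b = [(1 + cw) / 2, -(1 + cw), (1 + cw) / 2]
    a = [1 + alpha, -2 * cw, 1 - alpha]
    return _normalize(b, a)

def peaking(sample_rate, f0, gain_db, q=1.0):
    """Peaking EQ biquad: boosts or cuts by gain_db around f0."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / sample_rate
    alpha = math.sin(w0) / (2 * q)
    cw = math.cos(w0)
    b = [1 + alpha * amp, -2 * cw, 1 - alpha * amp]
    a = [1 + alpha / amp, -2 * cw, 1 - alpha / amp]
    return _normalize(b, a)

def response_db(section, sample_rate, freq):
    """Gain of one biquad section at `freq`, in dB."""
    b, a = section
    z1 = cmath.exp(-1j * 2 * math.pi * freq / sample_rate)
    z2 = z1 * z1
    h = (b[0] + b[1] * z1 + b[2] * z2) / (a[0] + a[1] * z1 + a[2] * z2)
    return 20 * math.log10(abs(h))

def apply_section(section, samples):
    """Run samples through one biquad (Direct Form I)."""
    b, a = section
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out

def apply_chain(chain, samples):
    """Run samples through each section in order."""
    for section in chain:
        samples = apply_section(section, samples)
    return samples

SAMPLE_RATE = 48000  # assumed; use your recording's actual rate
VOICE_CHAIN = [
    highpass(SAMPLE_RATE, 80.0),        # rumble filter
    peaking(SAMPLE_RATE, 200.0, -3.0),  # ease off the mud
    peaking(SAMPLE_RATE, 4000.0, 2.0),  # a touch of clarity
]
```

Calling `apply_chain(VOICE_CHAIN, samples)` on a list of float samples returns the equalized list, and `response_db` lets you check what each stage does at a given frequency before you ever listen to it.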

Equalization is like pepper and salt: best used sparingly. Too much of anything is going to badly affect the overall quality. Likewise, you will rarely get away with zero. Know the voice spectrally and work with its harmonic lines and sibilants.
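
To get a feel for what "sparingly" means, it helps to translate decibels into plain amplitude ratios. The conversion is the standard one for amplitude (20 times the base-10 logarithm of the ratio). The function names here are just for illustration.

```python
import math

def db_to_amplitude(db):
    """Convert a gain in dB to a linear amplitude ratio."""
    return 10 ** (db / 20)

def amplitude_to_db(ratio):
    """Convert a linear amplitude ratio to a gain in dB."""
    return 20 * math.log10(ratio)

for db in (1, 3, 6, 12):
    print(f"{db:+d} dB -> x{db_to_amplitude(db):.2f} amplitude")
```

A 3 dB boost is already about 1.41 times the amplitude, and 6 dB is roughly double, so a band pushed up 12 dB is nearly four times as loud in amplitude terms. Small moves go a long way.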

When you have identified the best settings for equalization of your voice, save those settings in your software and write them down in your notes. If you change microphones, your settings should be close to ideal, so start with them on the new mic. Adjust as necessary considering the tonal qualities of the new microphone. Take some spectrograms and actually look at your vocal components, then make the necessary adjustments.
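
If your software cannot export its settings, one simple way to keep them alongside your notes is a small JSON file. This is only a sketch: the layout, field names, microphone name, and band values below are hypothetical placeholders, not the format of any particular EQ plugin.

```python
import json
from pathlib import Path

# Hypothetical preset layout; every field name and value is illustrative only.
PRESET = {
    "microphone": "example-dynamic-mic",
    "sample_rate": 48000,
    "bands": [
        {"type": "highpass", "freq_hz": 80, "q": 0.707},
        {"type": "peaking", "freq_hz": 200, "gain_db": -3.0, "q": 1.0},
        {"type": "peaking", "freq_hz": 4000, "gain_db": 2.0, "q": 1.0},
    ],
}

def save_preset(path, preset):
    """Write the preset as human-readable JSON."""
    Path(path).write_text(json.dumps(preset, indent=2), encoding="utf-8")

def load_preset(path):
    """Read a preset back from disk."""
    return json.loads(Path(path).read_text(encoding="utf-8"))
```

Saving one file per microphone makes it easy to load the old preset as the starting point when you switch mics, then adjust from there.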

Watch for another posting here, soon, about managing your gain and amplitude dynamics. How you do compression, clipping, and gating is part of your audible identity in your vocal work.

© 2020 - 2026 Catbirdlinux.com, All Rights Reserved. Written and curated by WebDev Philip C.